The Lumos Hardware

Replacing Complex Optics with Nanophotonics

The Hardware-Software Co-Design

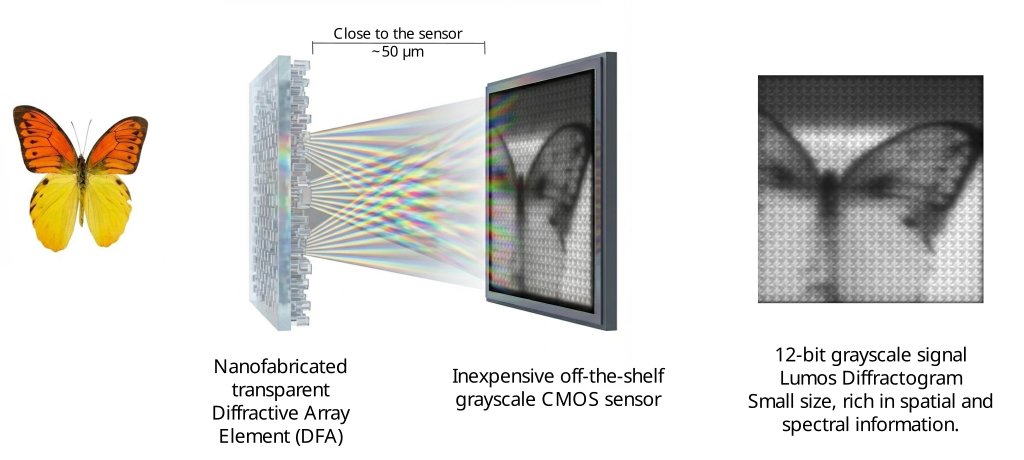

To break the barriers of cost and complexity, we didn’t just shrink a traditional spectrometer. We reimagined the physics of imaging.

Lumos employs a Computational Imaging approach. We shift the burden of complexity from bulky hardware (prisms, mirrors, moving parts) to sophisticated software. This allows us to use simple, robust, and scalable components to achieve performance that was previously impossible at this price point.

System Architecture: A Drop-In Revolution

The Lumos camera looks, acts, and connects just like a standard machine vision camera. There are no slit-scanners, no heavy gimbals, and no fragile alignment mechanisms.

The physical stack consists of three layers:

- Standard Optics: Compatible with off-the-shelf C-Mount lenses.

- The Diffractive Filter Array (DFA): Our core IP. A transparent, nanofabricated optical element bonded directly to the sensor.

- Commodity Sensors: We leverage the massive R&D economies of the mobile phone and industrial sensor markets.

  - Vis-NIR: Standard high-resolution CMOS sensors (e.g., Sony IMX).

  - SWIR: InGaAs sensors for industrial sorting.

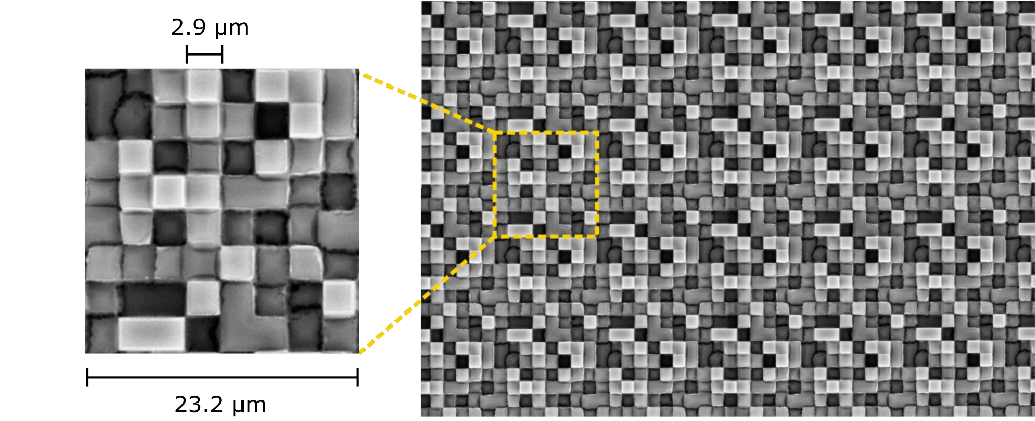

Innovative Wafer-Level Nanofabrication

Traditional hyperspectral cameras are expensive because they are built like watches: precision glass components, hand-assembled and aligned.

Lumos cameras are built like microchips.

We utilize Nano-Imprint Lithography (NIL) to fabricate the Diffractive Filter Arrays.

- Scalability: We print optics on standard wafers. Thousands of DFAs are produced in a single step.

- Cost: This semiconductor-based approach drives the unit cost down by orders of magnitude compared to traditional interference filters or prisms.

- Robustness: The DFA is a solid-state surface relief structure. It is immune to vibration and thermal drift.

Breaking the Resolution Limit (True HD)

One of the greatest compromises in snapshot spectral imaging has always been resolution. Traditional “Mosaic” cameras (using pixel-level filters) trade spatial resolution for spectral bands, often resulting in grainy, low-fidelity images (e.g., \(400 \times 400\) pixels).

Lumos delivers True High Definition.

Because our diffractive encoding is continuous and distributed, we preserve high-frequency spatial details that other systems lose. As validated in our recent Optica publication, we achieve:

- ~1 Megapixel Resolution: \(1304 \times 744\) spatial pixels.

- Full Spectral Context: 25+ bands across the Vis-NIR range.

This allows Lumos sensors to be used in applications requiring fine detail, such as detecting small defects on a production line or identifying features from high-altitude drones.
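The resolution trade-off described above is simple arithmetic. The sketch below assumes a hypothetical 2000 × 2000 sensor behind a 5 × 5 filter mosaic (neither figure refers to a specific product) and compares the result with the 1304 × 744 figure quoted above. The function name `mosaic_resolution` is illustrative only.

```python
def mosaic_resolution(sensor_w, sensor_h, tile):
    """Spatial resolution left after a tile x tile filter mosaic.

    Each tile of sensor pixels yields one spatial sample carrying
    tile * tile spectral bands, so each axis shrinks by a factor of `tile`.
    """
    return sensor_w // tile, sensor_h // tile


# Hypothetical mosaic camera: 2000 x 2000 sensor, 5 x 5 tile (25 bands).
mosaic_w, mosaic_h = mosaic_resolution(2000, 2000, 5)
mosaic_pixels = mosaic_w * mosaic_h

# Spatial resolution quoted in the text for Lumos.
lumos_w, lumos_h = 1304, 744
lumos_pixels = lumos_w * lumos_h

print(f"Mosaic: {mosaic_w} x {mosaic_h} = {mosaic_pixels:,} spatial pixels")
print(f"Lumos:  {lumos_w} x {lumos_h} = {lumos_pixels:,} spatial pixels")
print(f"Ratio:  {lumos_pixels / mosaic_pixels:.1f}x")
```

Under these assumptions the mosaic camera ends up at the \(400 \times 400\) scale mentioned earlier, while \(1304 \times 744\) comes to just under one million spatial pixels.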

Not All Snapshot Sensors Are Equal

Some approaches use Fabry-Pérot (Interference/Resonance) or Extended Bayer (Absorption) architectures. While these are “snapshot” capable, they come with significant drawbacks:

- Sensitivity: Absorptive filters block most of the light (often >90%), so they perform poorly in low light.

- Tunnel Vision: Fabry-Pérot filters rely on resonance. If light enters at an angle, the passband shifts toward shorter wavelengths (blue shift). This restricts them to very narrow Fields of View (FOV) and requires telecentric lenses.

- Manufacturing Risk: Resonance structures require nanometer-level tolerances, making them expensive and difficult to yield.

- Cost: These systems are typically expensive to build and maintain.

- Resolution: These systems are typically limited to a few hundred pixels per side.

Lumos uses Diffraction (Phase Modulation). Our optics are transparent (high sensitivity), work at wide angles (large FOV), and use robust micro-scale features that are easy to manufacture.
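The angle sensitivity of resonant filters noted above can be estimated with the standard thin-film approximation \(\lambda(\theta) = \lambda_0 \sqrt{1 - (\sin\theta / n_{\text{eff}})^2}\). The sketch below is a rough illustration only: the 700 nm passband and the effective index of 1.7 are assumed values, not measurements of any particular sensor, and `shifted_center_wavelength` is a hypothetical helper name.

```python
import math


def shifted_center_wavelength(lambda0_nm, angle_deg, n_eff):
    """Center wavelength of an interference filter at a given incidence angle.

    Thin-film approximation:
        lambda(theta) = lambda0 * sqrt(1 - (sin(theta) / n_eff) ** 2)
    """
    s = math.sin(math.radians(angle_deg)) / n_eff
    return lambda0_nm * math.sqrt(1.0 - s * s)


# Illustrative values only: 700 nm passband, effective index 1.7.
LAMBDA0_NM = 700.0
N_EFF = 1.7

for angle in (0, 10, 20, 30):
    lam = shifted_center_wavelength(LAMBDA0_NM, angle, N_EFF)
    print(f"{angle:2d} deg -> {lam:6.1f} nm (shift {lam - LAMBDA0_NM:+.1f} nm)")
```

The shift is always toward shorter wavelengths and grows with angle, which is why resonant designs tend to be paired with narrow fields of view or telecentric optics.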

Technology Comparison with a Few Alternatives

| Feature | Lumos (Diffractive) | Fabry-Pérot (e.g. IMEC) | Extended Bayer (5x5) |
|---|---|---|---|
| Physics | Phase Modulation (Transparent) | Resonance (Interference) | Amplitude (Absorption) |
| Sensitivity | High (≥90% photons reach sensor) | Medium (Bandwidth limited) | Low (~1/25th of photons) |
| Field of View | Large (Standard Lenses) | Small (Angle Sensitive) | Large |
| Fabrication | Robust (Micron-scale features) | Difficult (Nanometer tolerance) | Complex (Multi-layer pigment) |
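To put the sensitivity row in perspective, the sketch below compares the two throughput figures from the table under a simple shot-noise-limited model, where signal-to-noise ratio equals the square root of the detected photon count. The photon count per pixel is a hypothetical value, and the model ignores read noise, quantum efficiency, and any noise introduced by computational reconstruction, so it should be read as a rough upper-level comparison rather than a performance prediction.

```python
import math


def shot_noise_snr(photons_at_aperture, throughput):
    """SNR of a shot-noise-limited pixel: sqrt of the detected photon count."""
    return math.sqrt(photons_at_aperture * throughput)


photons = 10_000  # hypothetical photons arriving per pixel per exposure

snr_transparent = shot_noise_snr(photons, 0.90)  # ">= 90%" entry in the table
snr_mosaic = shot_noise_snr(photons, 1 / 25)     # "~1/25th" entry in the table

print(f"Transparent optic: SNR ~ {snr_transparent:.1f}")
print(f"5x5 absorptive:    SNR ~ {snr_mosaic:.1f}")
print(f"Ratio:             {snr_transparent / snr_mosaic:.2f}x")
```

Because SNR scales with the square root of the photon count in this model, a roughly 22-fold difference in throughput translates into a smaller, but still substantial, difference in SNR.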

The Result: The Diffractogram

Unlike most hyperspectral systems, ours does not output a large 3D data cube. It outputs a Diffractogram: a raw, optically compressed signal that contains the full complexity of the scene in a highly efficient format.

This signal is the key to our unique approach to spectral imaging.
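For a sense of scale, the sketch below computes the size of a 3D data cube with the \(1304 \times 744\) spatial pixels and 25 bands quoted earlier, and compares it with a single 2D frame. Two points are assumptions made purely for illustration: the 16-bit sample depth, and the idea that a raw frame has the same pixel count as the reconstructed image. The actual Diffractogram dimensions are not stated here.

```python
def n_bytes(width, height, bands=1, bytes_per_sample=2):
    """Uncompressed size in bytes of a width x height x bands array."""
    return width * height * bands * bytes_per_sample


# Data cube at the resolution and band count quoted in the text,
# assuming 16-bit (2-byte) samples.
cube_bytes = n_bytes(1304, 744, bands=25)

# A single 2D frame of the same spatial size (assumed, for comparison only).
frame_bytes = n_bytes(1304, 744)

print(f"Data cube:    {cube_bytes / 1e6:.1f} MB")
print(f"Single frame: {frame_bytes / 1e6:.1f} MB")
print(f"Ratio:        {cube_bytes // frame_bytes}x")
```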